library(tidyverse)

library(broom)

library(stargazer)

library(skimr)

library(margins)

library(yardstick)

library(WVPlots)

library(pROC)

library(glmnet)

library(gamlr)

library(Matrix)

library(DT)

library(rmarkdown)

library(hrbrthemes)

library(ggthemes)

theme_set(

theme_ipsum()

)

scale_colour_discrete <- function(...) scale_color_colorblind(...)

scale_fill_discrete <- function(...) scale_fill_colorblind(...)Regularized Linear Regression

Online Shopping

Setup

Load Data

url <- "https://bcdanl.github.io/data/browser-online-shopping.zip"

dest <- file.path(tempdir(), "browser-online-shopping.zip")

download.file(url, destfile = dest, mode = "wb")

df <- readr::read_csv(dest)Model

- The browser dataset contains web browsing logs for 10,000 households.

- The browser dataset include a year’s worth of their browser logs for the 1,000 most heavily trafficked websites

- Each browser in the sample spent at least $1 online in the same year.

\[ \log(\text{spend}_{i}) = \beta_0 + \beta_1 X_{1,i} +\,\cdots\,+ \beta_{1000} X_{1000,i} + \epsilon_i \]

- \(\text{spend}_{i}\): household \(i\)’s amount of dollars spent on online shopping

- \(X_{p, i}\) household \(i\)’s percentage of visiting the \(p\) website

Checking Data

df |>

transmute(row_sum = rowSums(across(-1))) |>

skim()| Name | transmute(df, row_sum = r… |

| Number of rows | 10000 |

| Number of columns | 1 |

| _______________________ | |

| Column type frequency: | |

| numeric | 1 |

| ________________________ | |

| Group variables | None |

Variable type: numeric

| skim_variable | n_missing | complete_rate | mean | sd | p0 | p25 | p50 | p75 | p100 | hist |

|---|---|---|---|---|---|---|---|---|---|---|

| row_sum | 0 | 1 | 100 | 0 | 100 | 100 | 100 | 100 | 100 | ▁▂▇▂▁ |

Train-Test Split

set.seed(1)

df <- df |>

mutate(rnd = runif(n()))

dtrain <- df |>

filter(rnd > .2) |>

select(-rnd)

dtest <- df |>

filter(rnd <= .2) |>

select(-rnd)

X_train <- dtrain |> select(-spend) |> as.matrix()

X_test <- dtest |> select(-spend) |> as.matrix()

y_train <- log(dtrain$spend)

y_test <- log(dtest$spend)

# Predictor matrices (glmnet requires matrix input)

# X_train <- train |> select(-spend) |> as.matrix() |> Matrix(sparse = TRUE)

# X_test <- test |> select(-spend) |> as.matrix() |> Matrix(sparse = TRUE)Linear Regression

model <- lm(log(spend) ~ ., data = dtrain)beta_linear <- model |>

tidy() |>

mutate(

stars = case_when(

p.value < .01 ~ "***", # smallest p-values first

p.value < .05 ~ "**",

p.value < .1 ~ "*",

TRUE ~ ""

)

) |>

select(term, estimate, stars) |>

rename(beta = estimate)

model |>

glance()# A tibble: 1 × 12

r.squared adj.r.squared sigma statistic p.value df logLik AIC BIC

<dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 0.276 0.172 1.52 2.66 9.82e-118 999 -14120. 30242. 37235.

# ℹ 3 more variables: deviance <dbl>, df.residual <int>, nobs <int>Lasso Linear Regression

set.seed(1)

model_lasso <- cv.glmnet(

x = X_train,

y = y_train,

alpha = 1,

nfolds = 5, # 10 is default

intercept = FALSE # TRUE is default

)CVErrors_lasso <- model_lasso |> tidy()

CVErrors_lasso |>

paged_table()model_lasso |>

glance() |>

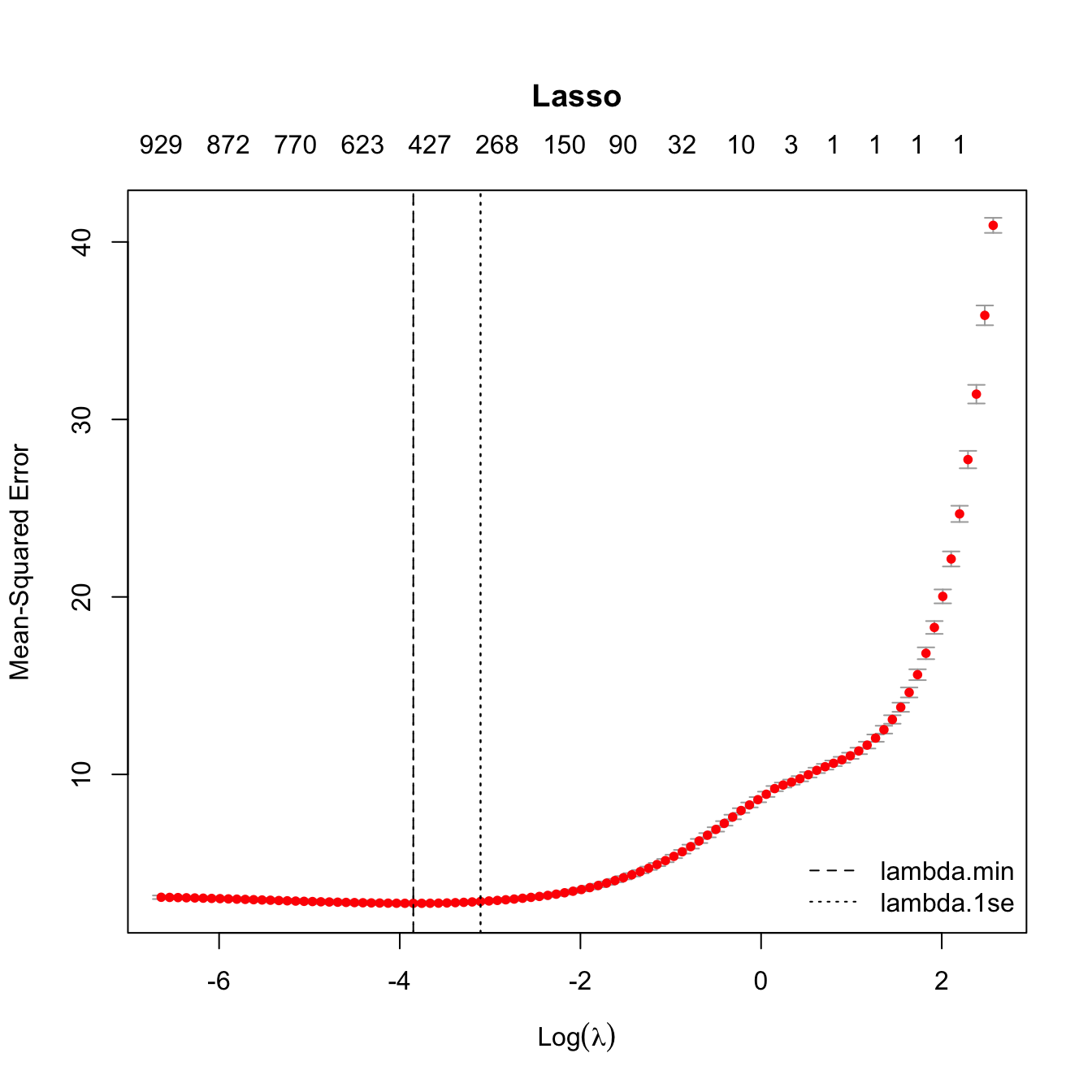

paged_table()Lasso CV Curve

# Run all at once

par(mar = c(5.1, 4.1, 6.1, 2.1)) # title margin

plot(model_lasso, main = "Lasso")

abline(v = log(model_lasso$lambda.min), lty = 2)

abline(v = log(model_lasso$lambda.1se), lty = 3)

legend("bottomright",

legend = c("lambda.min", "lambda.1se"),

lty = c(2, 3),

bty = "n")

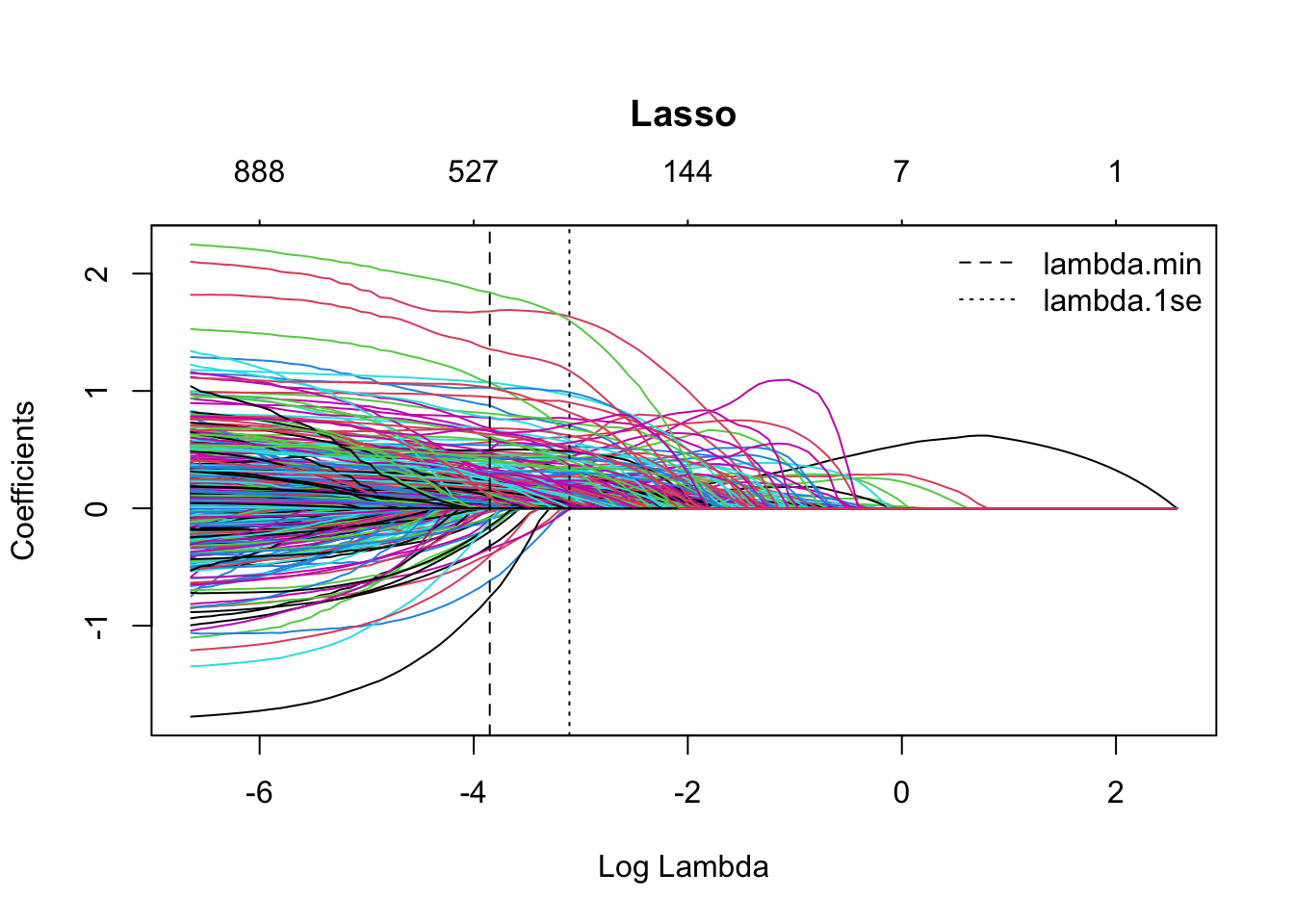

Lasso Coefficient Path

# Run all at once

par(mar = c(5.1, 4.1, 6.1, 2.1)) # title margin

plot(model_lasso$glmnet.fit, xvar = "lambda",

main = "Lasso")

abline(v = log(model_lasso$lambda.min), lty = 2)

abline(v = log(model_lasso$lambda.1se), lty = 3)

legend("topright",

legend = c("lambda.min", "lambda.1se"),

lty = c(2, 3),

bty = "n")

Lasso Coefficients

# Lasso

beta_lasso_1se <- coef(model_lasso,

s = "lambda.1se") # default

beta_lasso_min <- coef(model_lasso,

s = "lambda.min")

betas <- data.frame(

term = rownames(beta_lasso_1se),

beta_lasso_1se = as.numeric(beta_lasso_1se),

beta_lasso_min = as.numeric(beta_lasso_min)

)

betas <- betas |>

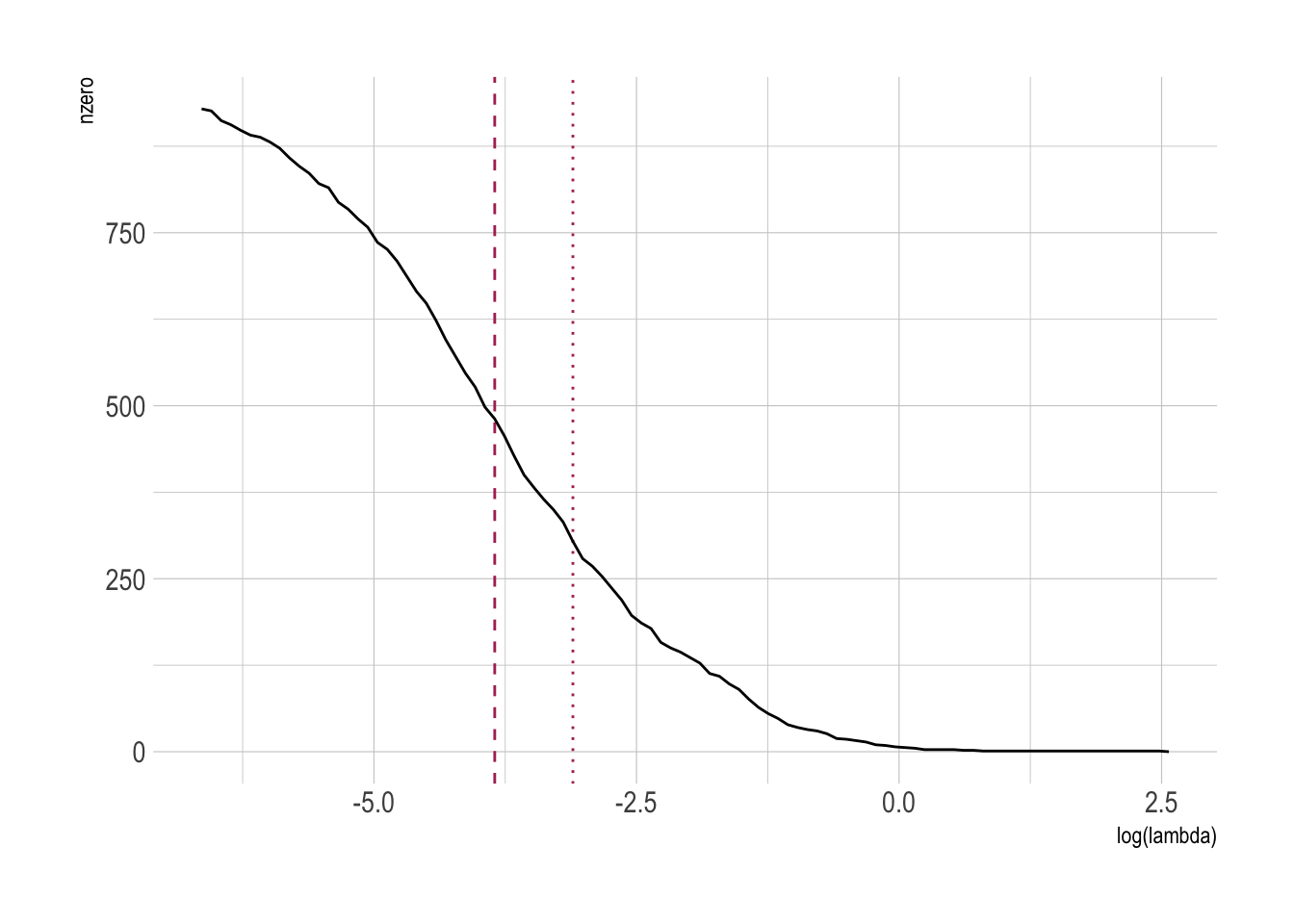

left_join(beta_linear)Number of Non-zero Betas

# Show only nonzero in either solution

betas_lasso_nz <- betas |>

filter(beta_lasso_1se != 0 | beta_lasso_min != 0) |>

arrange(abs(beta_lasso_1se), abs(beta_lasso_min))

nrow(betas_lasso_nz)[1] 485CVErrors_lasso |>

ggplot(aes(x = log(lambda),

y = nzero)) +

geom_vline(xintercept = log(model_lasso$lambda.min),

color = 'maroon', lty = 2) +

geom_vline(xintercept = log(model_lasso$lambda.1se),

color = 'maroon', lty = 3) +

geom_line()

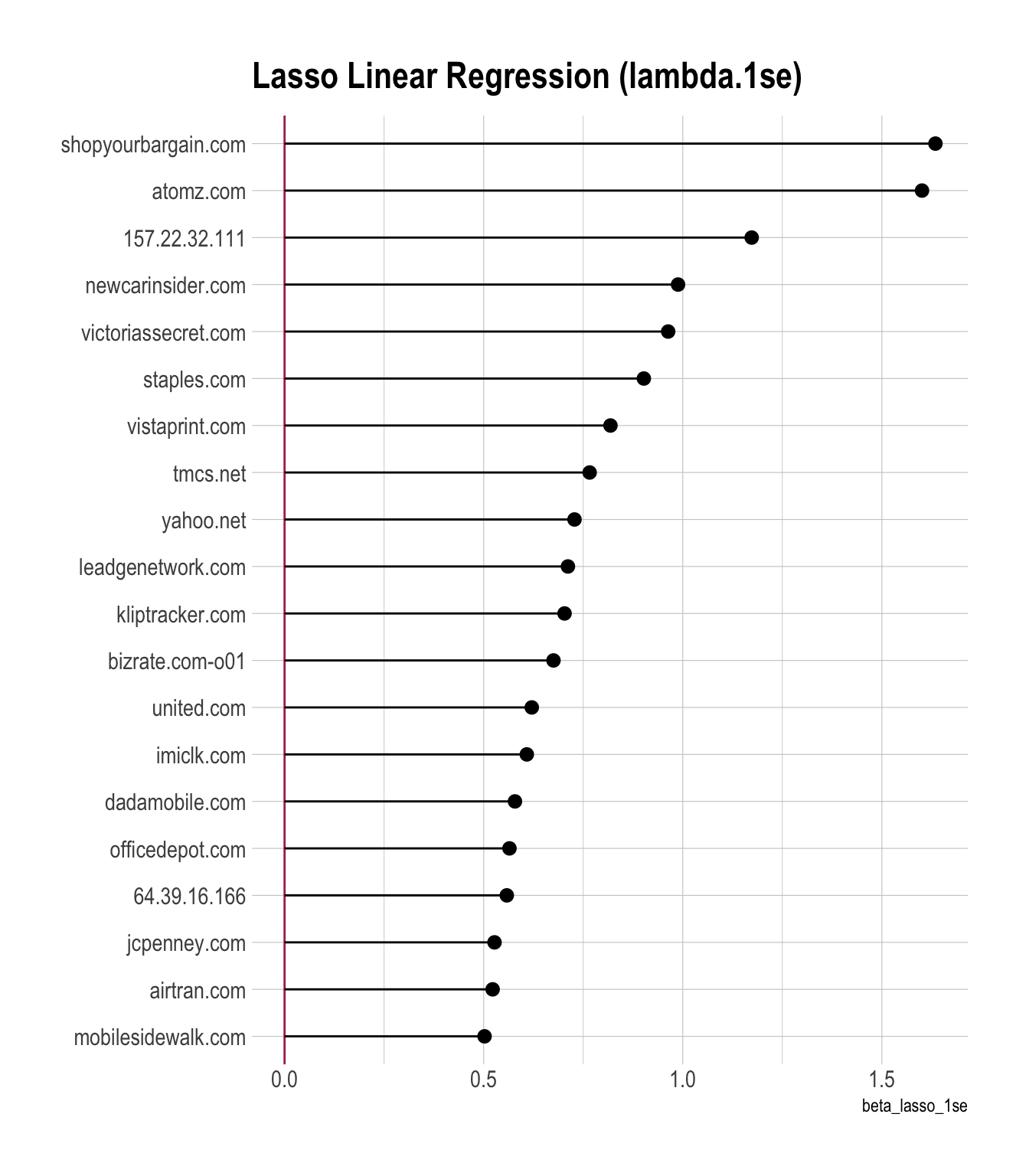

Coefficient Plots

Top 20

betas |>

arrange(-beta_lasso_1se) |>

head(20) |>

ggplot(aes(x = beta_lasso_1se,

y = fct_reorder(term, beta_lasso_1se)

)

) +

geom_vline(xintercept = 0,

color = 'maroon') +

geom_pointrange(

aes(xmin = 0,

xmax = beta_lasso_1se

)

) +

labs(y = NULL,

title = "Lasso Linear Regression (lambda.1se)")

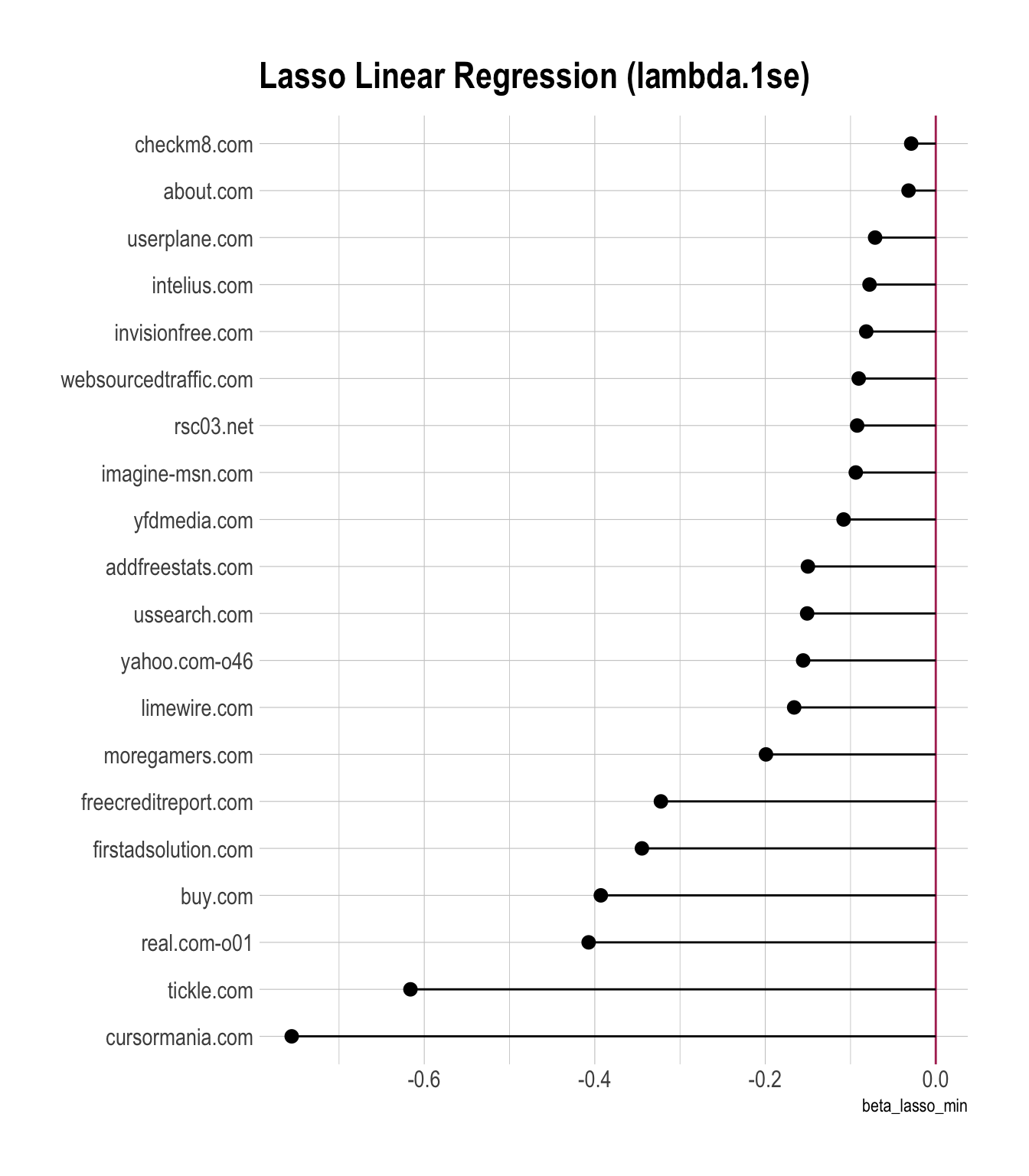

Worst 20

betas |>

filter(beta_lasso_min != 0) |>

arrange(beta_lasso_min) |>

head(20) |>

ggplot(aes(x = beta_lasso_min,

y = fct_reorder(term, beta_lasso_min)

)

) +

geom_vline(xintercept = 0,

color = 'maroon') +

geom_pointrange(

aes(xmin = 0,

xmax = beta_lasso_min

)

) +

labs(y = NULL,

title = "Lasso Linear Regression (lambda.1se)")

Ridge Linear Regression

set.seed(1)

model_ridge <- cv.glmnet(

x = X_train,

y = y_train,

alpha = 0,

nfolds = 5, # 10 is default

intercept = FALSE # TRUE is default

)CVErrors_ridge <- model_ridge |> tidy()

CVErrors_ridge |>

paged_table()model_ridge |>

glance() |>

paged_table()Ridge Coefficients

# ridge

beta_ridge_1se <- coef(model_ridge,

s = "lambda.1se") # default

beta_ridge_min <- coef(model_ridge,

s = "lambda.min")

betas_ridge <- data.frame(

term = rownames(beta_ridge_1se),

beta_ridge_1se = as.numeric(beta_ridge_1se),

beta_ridge_min = as.numeric(beta_ridge_min)

)

betas <- betas |>

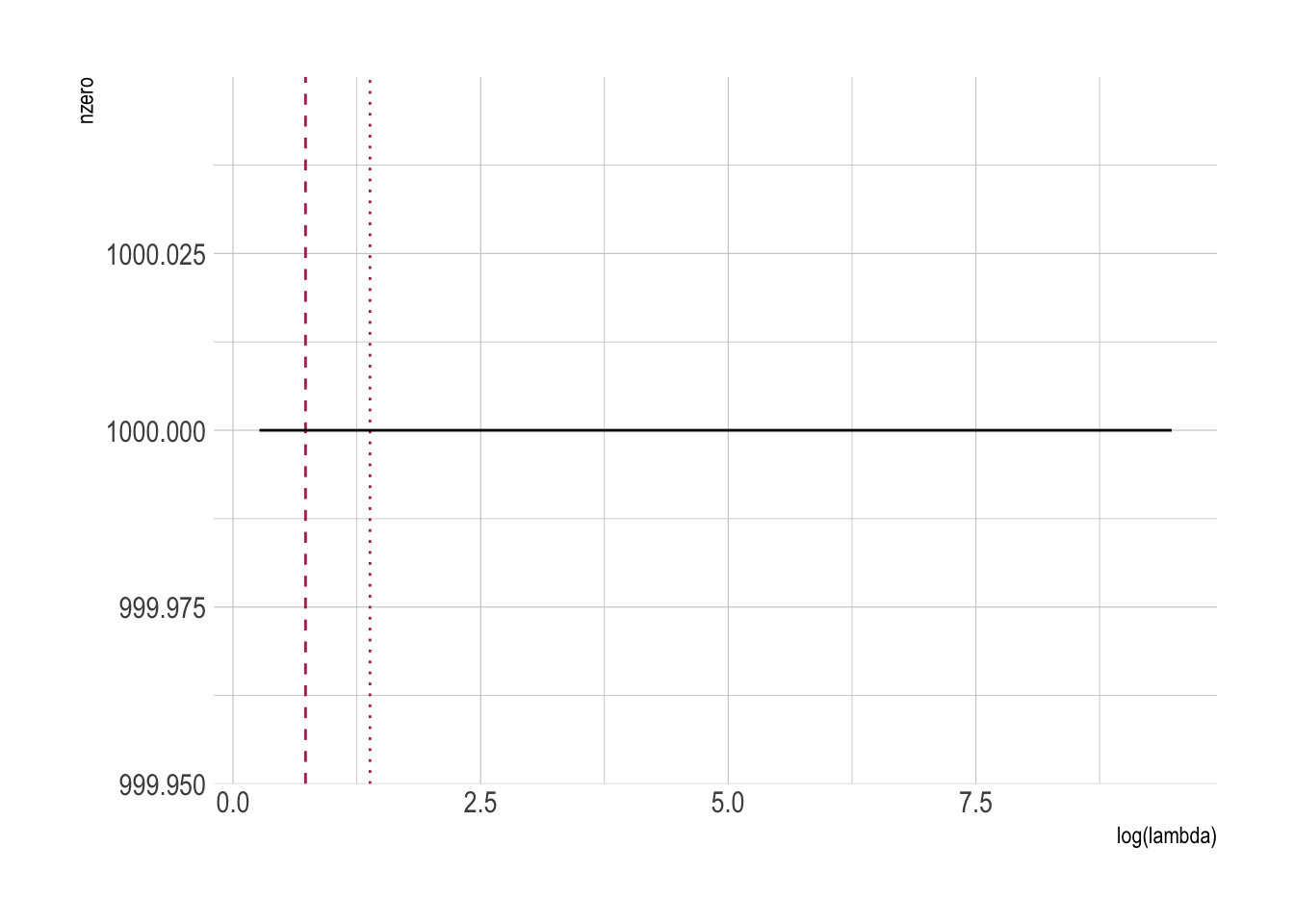

left_join(betas_ridge)Number of Non-zero Betas

# Show only nonzero in either solution

betas_ridge_nz <- betas_ridge |>

filter(beta_ridge_1se != 0 | beta_ridge_min != 0) |>

arrange(abs(beta_ridge_1se), abs(beta_ridge_min))

nrow(betas_ridge_nz)[1] 1000CVErrors_ridge |>

ggplot(aes(x = log(lambda),

y = nzero)) +

geom_vline(xintercept = log(model_ridge$lambda.min),

color = 'maroon', lty = 2) +

geom_vline(xintercept = log(model_ridge$lambda.1se),

color = 'maroon', lty = 3) +

geom_line()

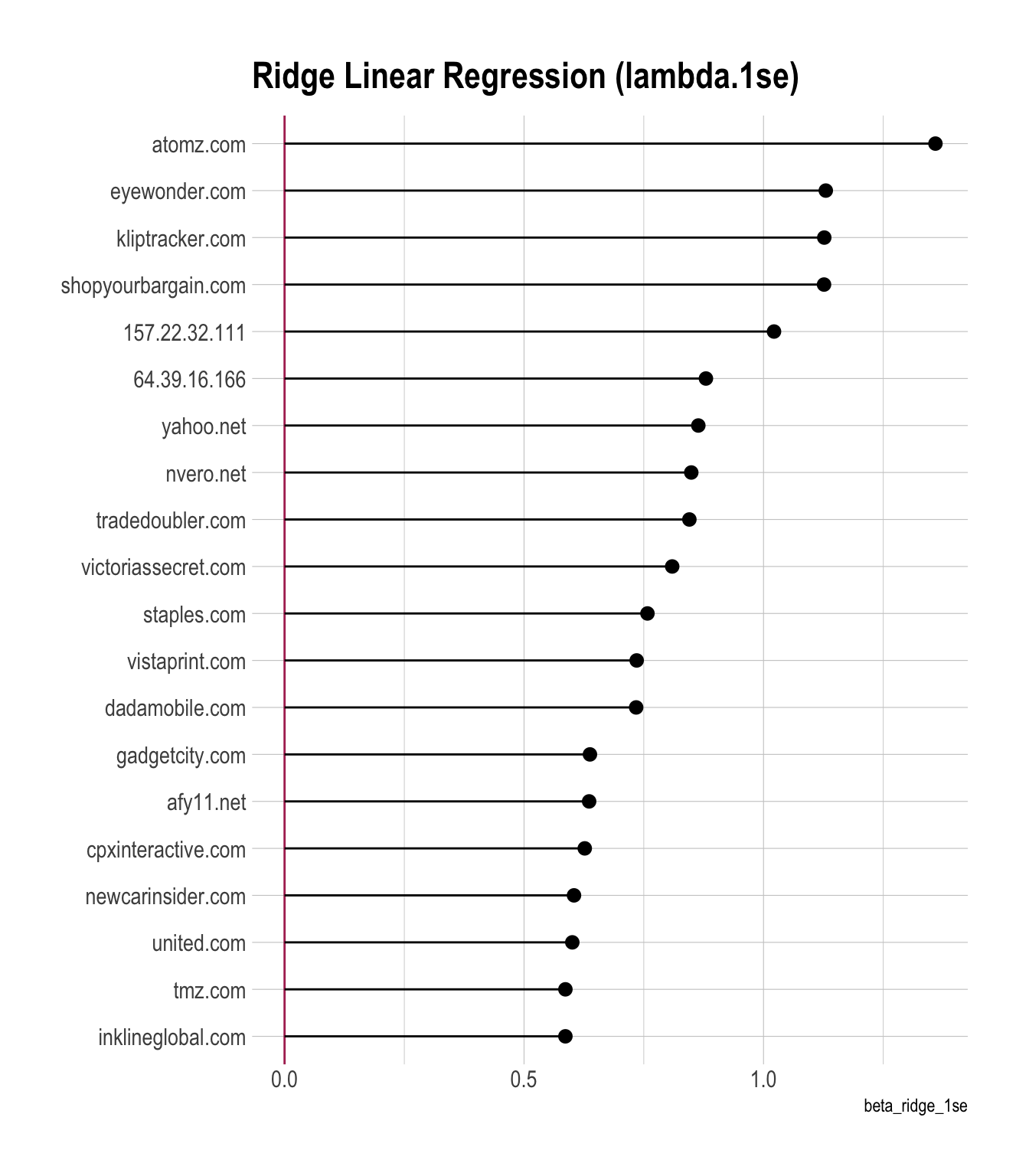

Coefficient Plots

Top 20

betas |>

arrange(-beta_ridge_1se) |>

head(20) |>

ggplot(aes(x = beta_ridge_1se,

y = fct_reorder(term, beta_ridge_1se)

)

) +

geom_vline(xintercept = 0,

color = 'maroon') +

geom_pointrange(

aes(xmin = 0,

xmax = beta_ridge_1se

)

) +

labs(y = NULL,

title = "Ridge Linear Regression (lambda.1se)")

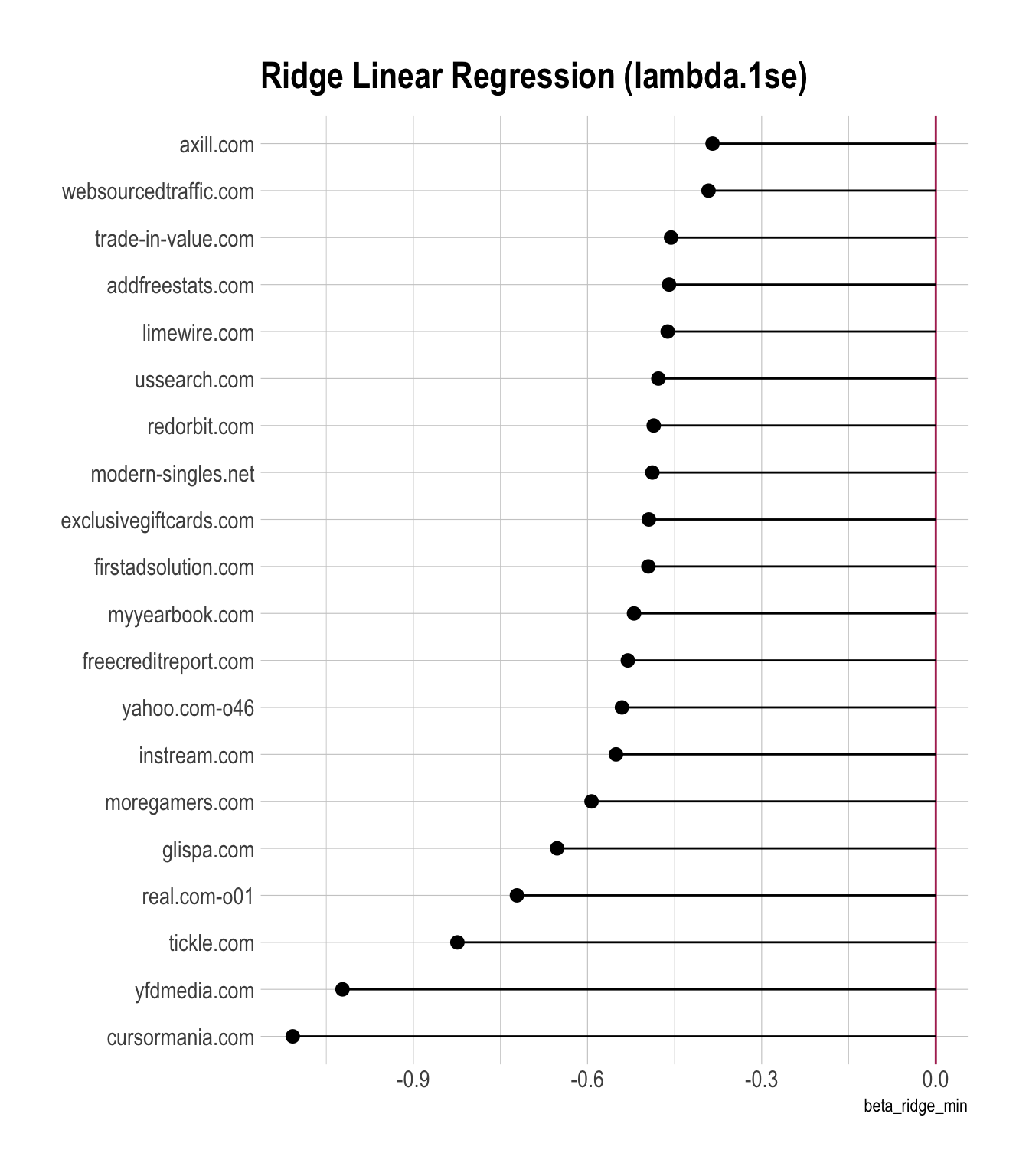

Worst 20

betas |>

filter(beta_ridge_min != 0) |>

arrange(beta_ridge_min) |>

head(20) |>

ggplot(aes(x = beta_ridge_min,

y = fct_reorder(term, beta_ridge_min)

)

) +

geom_vline(xintercept = 0,

color = 'maroon') +

geom_pointrange(

aes(xmin = 0,

xmax = beta_ridge_min

)

) +

labs(y = NULL,

title = "Ridge Linear Regression (lambda.1se)")

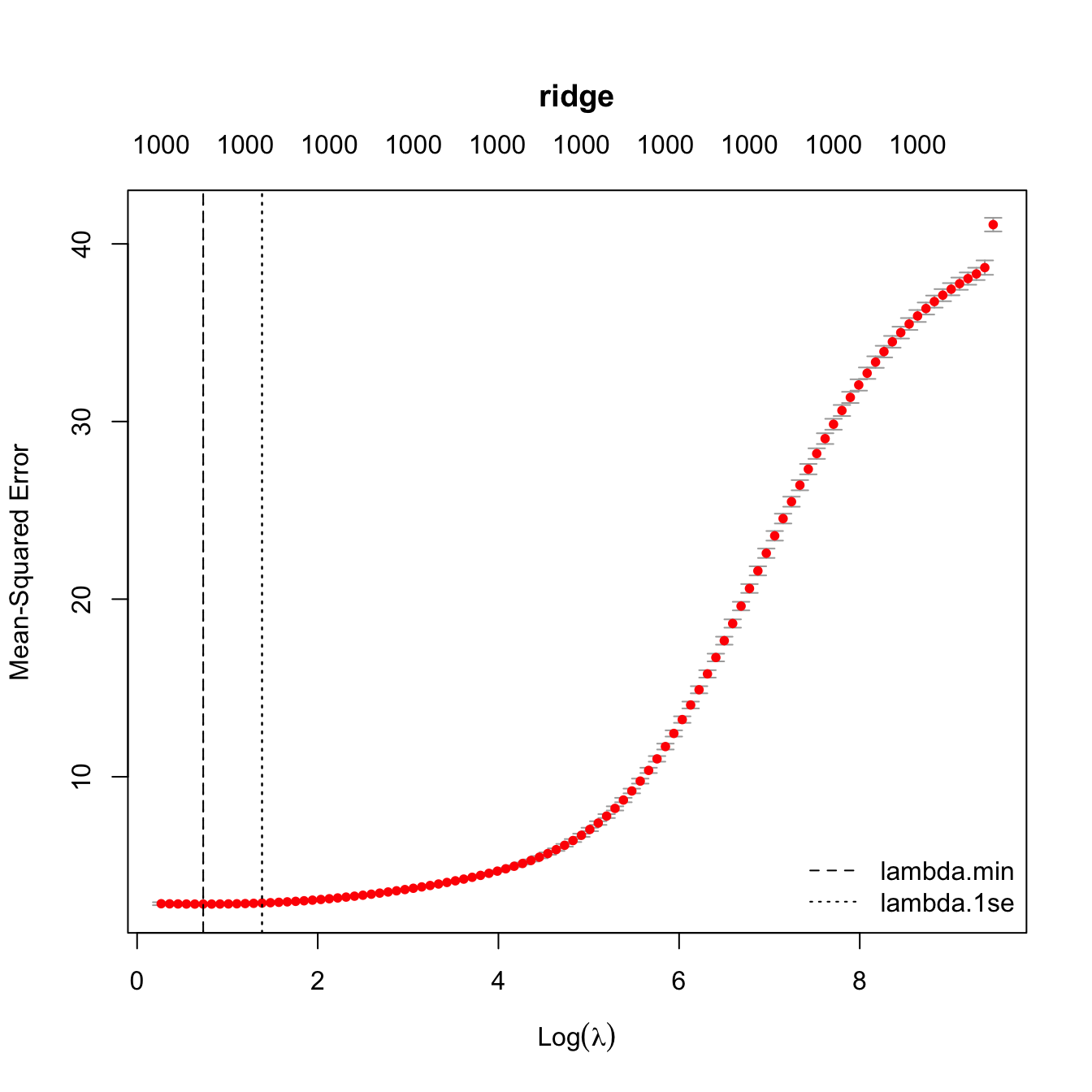

Ridge CV Curve

# Run all at once

par(mar = c(5.1, 4.1, 6.1, 2.1)) # title margin

plot(model_ridge, main = "ridge")

abline(v = log(model_ridge$lambda.min), lty = 2)

abline(v = log(model_ridge$lambda.1se), lty = 3)

legend("bottomright",

legend = c("lambda.min", "lambda.1se"),

lty = c(2, 3),

bty = "n")

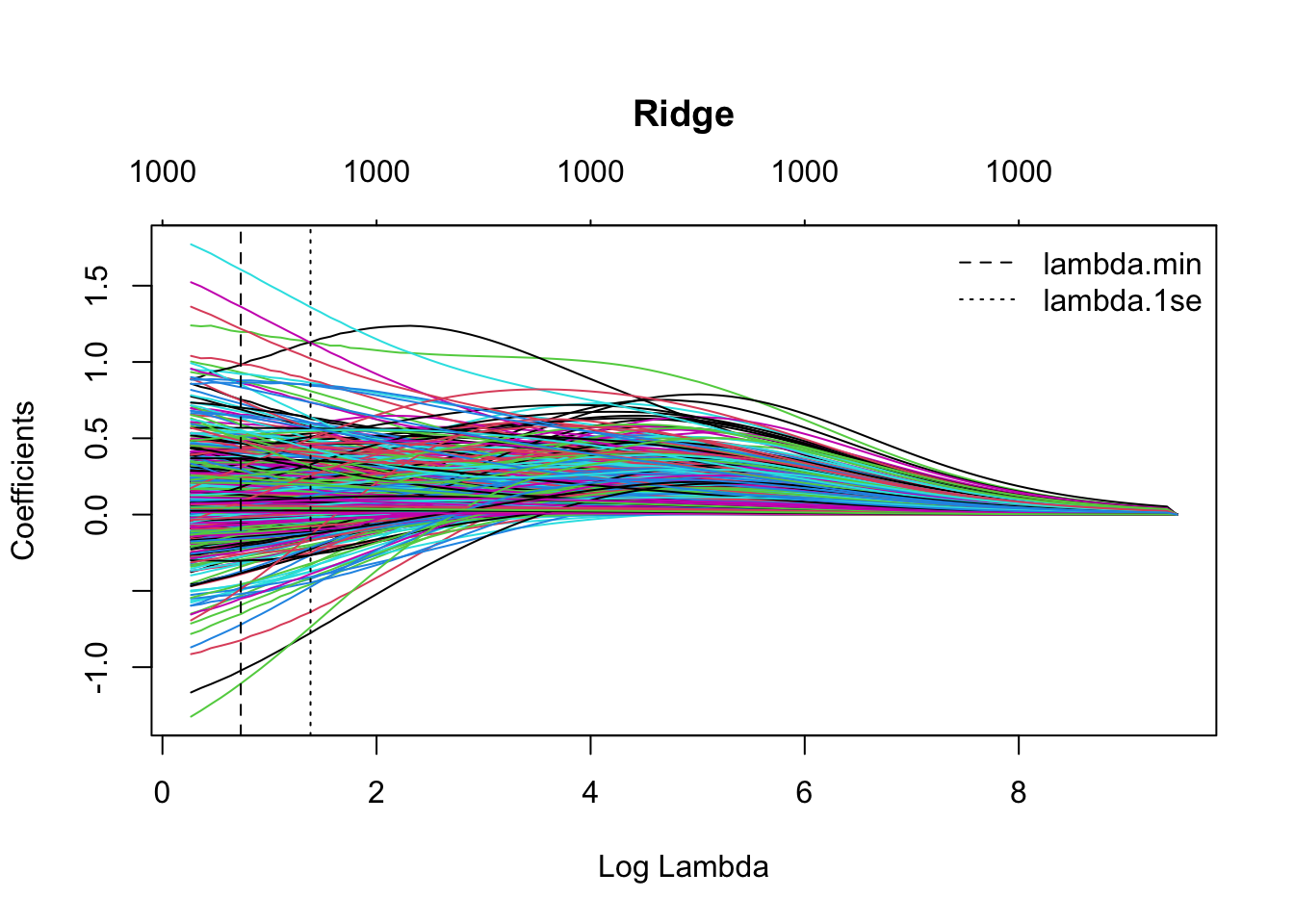

Ridge Coefficient Path

# Run all at once

par(mar = c(5.1, 4.1, 6.1, 2.1)) # title margin

plot(model_ridge$glmnet.fit, xvar = "lambda",

main = "Ridge")

abline(v = log(model_ridge$lambda.min), lty = 2)

abline(v = log(model_ridge$lambda.1se), lty = 3)

legend("topright",

legend = c("lambda.min", "lambda.1se"),

lty = c(2, 3),

bty = "n")

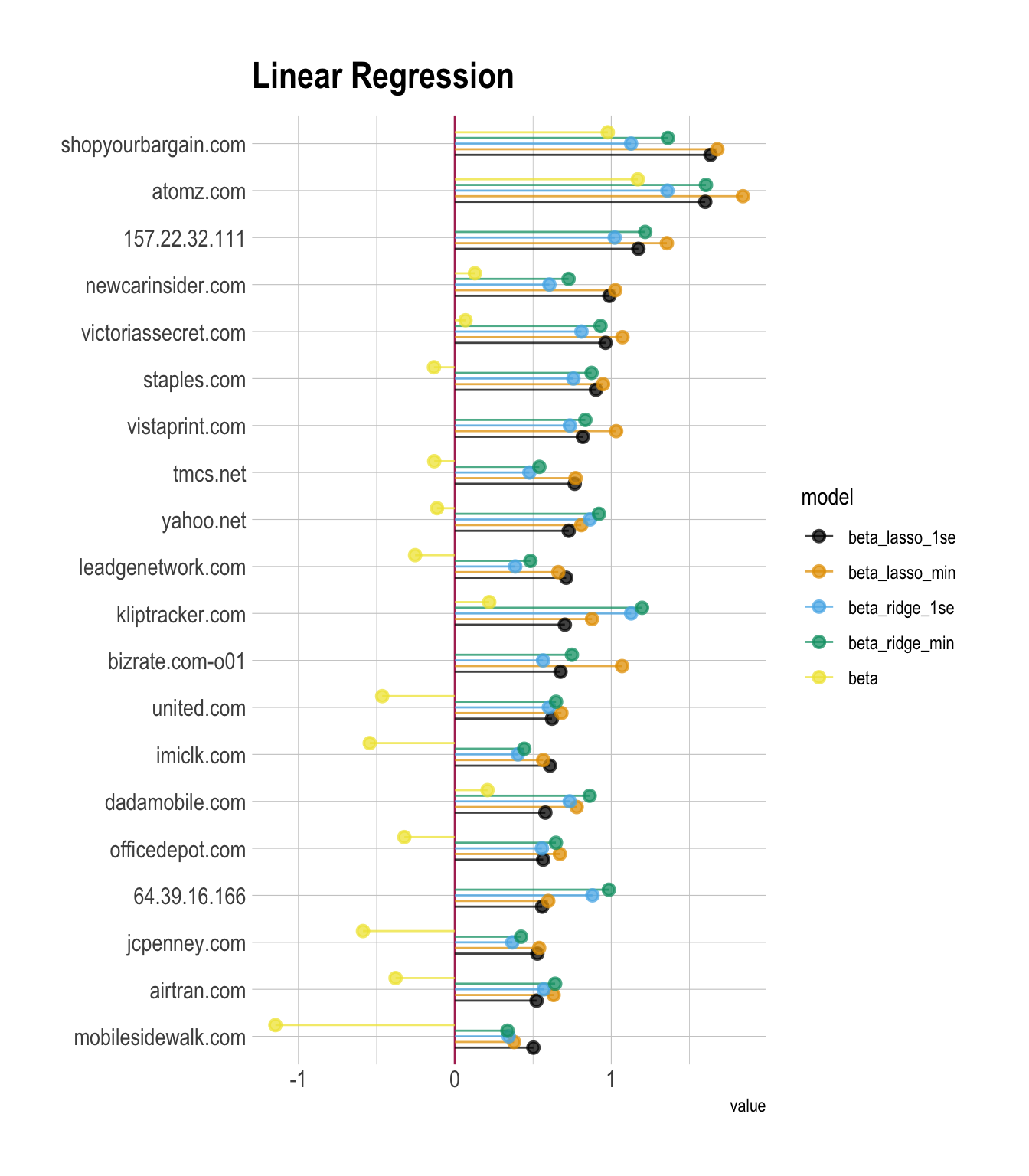

Coefficient Plots for All Models

Top 20

betas |>

relocate(stars, beta, .after = term) |>

arrange(-beta_lasso_1se) |>

head(20) |>

mutate(term = factor(term),

term = fct_reorder(term, beta_lasso_1se)) |>

pivot_longer(cols = beta:beta_ridge_min,

names_to = "model",

values_to = "value") |>

mutate(model = factor(model,

levels = c("beta_lasso_1se",

"beta_lasso_min",

"beta_ridge_1se",

"beta_ridge_min",

"beta"))) |>

ggplot(aes(x = value, y = term, color = model)) +

geom_vline(xintercept = 0,

color = 'maroon') +

geom_pointrange(aes(xmin = 0, xmax = value),

alpha = .75,

position = position_dodge(width = 0.6)) +

labs(y = NULL,

title = "Linear Regression")

Prediction & RMSE on Test Set

dtest_pred <- dtest |>

mutate(

.fitted = predict(model, newdata = dtest),

.fitted_lasso_1se = predict(model_lasso, newx = X_test,

s = "lambda.1se"),

.fitted_lasso_min = predict(model_lasso, newx = X_test,

s = "lambda.min"),

.fitted_ridge_1se = predict(model_ridge, newx = X_test,

s = "lambda.1se"),

.fitted_ridge_min = predict(model_ridge, newx = X_test,

s = "lambda.min")

) |>

select(spend, starts_with(".fitted"))

dtest_RMSE <- dtest_pred |>

mutate(resid_sq = ( log(spend) - .fitted )^2,

resid_lasso_1se_sq = ( log(spend) - .fitted_lasso_1se )^2,

resid_lasso_min_sq = ( log(spend) - .fitted_lasso_min )^2,

resid_ridge_1se_sq = ( log(spend) - .fitted_ridge_1se )^2,

resid_ridge_min_sq = ( log(spend) - .fitted_ridge_min )^2,

) |>

summarise(

RMSE = sqrt( mean(resid_sq) ),

RMSE_lasso_1se = sqrt( mean(resid_lasso_1se_sq) ),

RMSE_lasso_min = sqrt( mean(resid_lasso_min_sq) ),

RMSE_ridge_1se = sqrt( mean(resid_ridge_1se_sq) ),

RMSE_ridge_min = sqrt( mean(resid_ridge_min_sq) )

)

dtest_RMSE |>

paged_table()